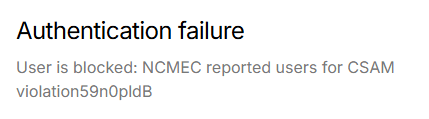

A few days ago, xAI users began complaining about a troubling issue tied to Elon Musk’s chatbot Grok. According to the complaints, users who used Grok, logged out, and then tried to log back into their accounts were shown a block message referencing NCMEC and CSAM. NCMEC stands for the National Center for Missing & Exploited Children, and CSAM stands for child sexual abuse material. The message appeared even for users who were sure they hadn’t violated any of xAI’s rules.

Users Reveal They Didn’t Touch Grok Imagine But Still Got the CSAM Block Message

Our research indicates that the issue began more than three days ago. Reports on Reddit show that many affected xAI users never touched Grok Imagine but still received the CSAM block message. For example, user test7747 said he had done nothing related to CSAM but was still flagged.

“I got this message yesterday, and I don’t know what to do. I am very scared, even though I didn’t do anything related to CSAM stuff. Is this a false alarm or what?”

Another user, who uploaded a family photo and asked Grok to remove the kids from the image, received the same Grok CSAM block message. The combination of “remove” and “kids” in the request appears to have tripped the system.

Recent reports indicate that xAI has quietly removed the terms “NCMEC” and “CSAM” from the error message. Users who have since rechecked their account status now see a plain block message: “User is blocked”. Others report a generic moderation notice in place of the Grok CSAM block message.

xAI Yet to Respond to the Issue

Several days on, xAI has yet to respond to the issue. Many affected users have emailed support@x.ai but say they have received neither an acknowledgment nor a resolution.

The exact cause of the issue remains unclear. Some users suspect that xAI’s automated safety filters are overly aggressive and are flagging harmless activity.

Under US law, online service providers are required to report apparent CSAM they become aware of to NCMEC. xAI has likely tuned its system to err heavily on the side of compliance, which could explain why the tools it uses to scan content, such as hash matching, are producing false positives. xAI may also have replaced the NCMEC and CSAM references with a plain block message to avoid wrongly accusing innocent users. However, that change does not restore access for users who were blocked in error.

Last December, in response to a user prompt, Grok generated and shared an AI image of two young girls in sexualized attire. The incident violated ethical standards and potentially US laws on CSAM, and the chatbot issued an apology for the error. That episode may explain why the system is now so strict about anything that could relate to CSAM.

Will xAI unblock the affected users and restore their access? Will xAI support acknowledge the error and roll out a fix? We’ll keep watching for updates from the xAI team and will share anything worthwhile as soon as we find it.
